Product Updates

May 23, 2023

Improvements to Intelligems JavaScript Performance

Intelligems' ongoing improvements to optimize JavaScript performance

Christian Dalton

Improving the Performance of Our App

At Intelligems, we are always looking for ways to improve our app's performance. In this blog post, we'll share how we identified performance opportunities in our latest sprint and the specific changes we made to address each one.

Identifying Opportunities

Our first step was to identify potential shortcomings objectively, using Google Lighthouse as a baseline measure and Firefox Performance Profiles for in-depth analysis.

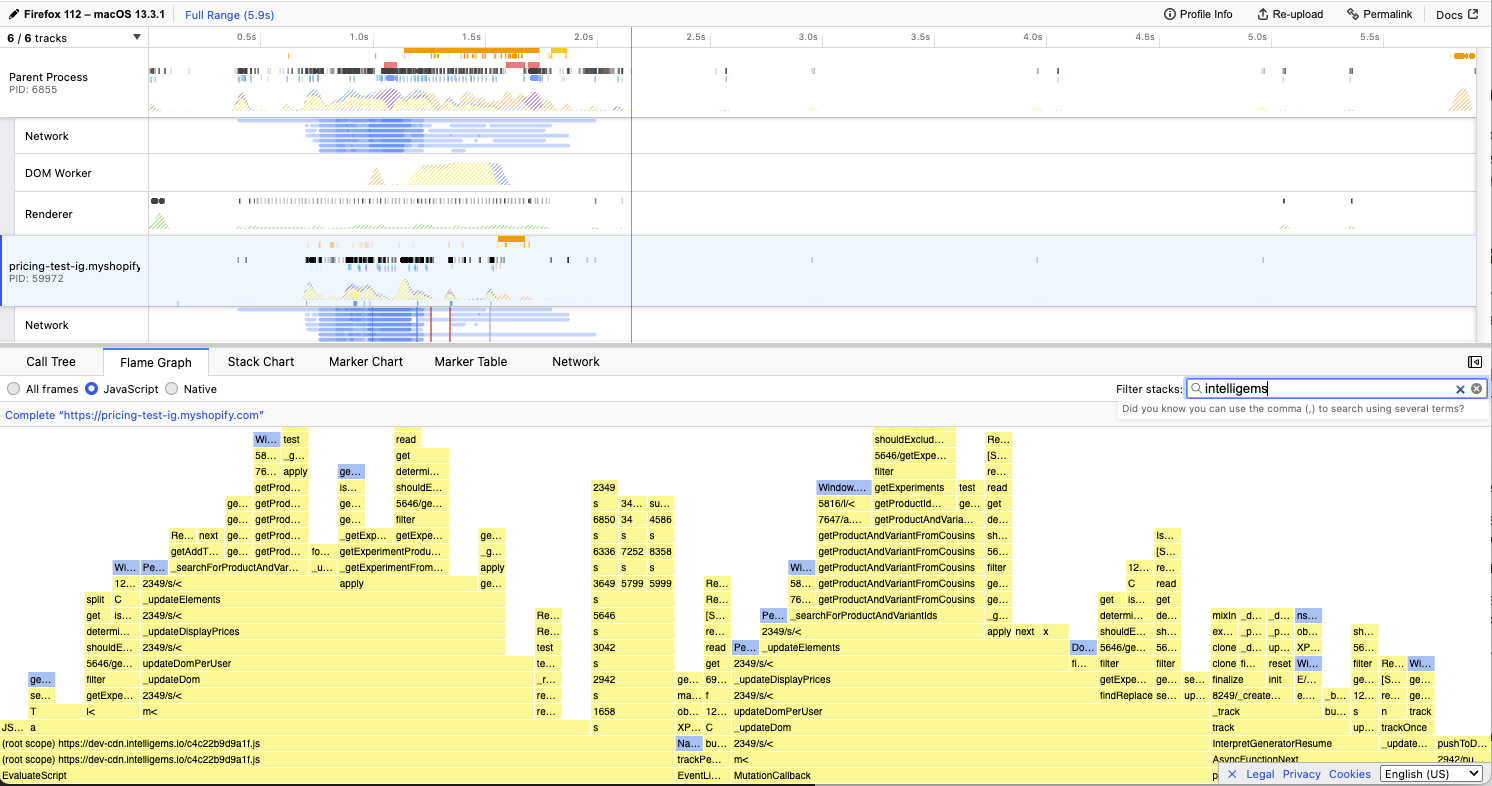

The Firefox Profiles offered many insights into how our plugin behaves at scale. As we dug into each profile, we noted every area with room for improvement, along with our hypotheses for improving it. Specifically, we examined the JavaScript flame graphs, filtered for “intelligems”.

While we raised many questions and ideas, the following were of particular interest:

We memoize many of our pure functions using an in-house memoization function, and these functions run many times per page load. Was our function performant, or would switching to an NPM package help? What other pure functions could we memoize?

Reading from LocalStorage and Cookies is costly, and our app was over-fetching from both. How could we reduce this load?

Could we debounce more of our impure functions? What tradeoffs, if any, would this impose on the user experience?

Was our app updating the page too eagerly, at the cost of site speed? How could we update the page more lazily without causing flickering?
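Debouncing collapses a burst of calls into a single call. The post doesn't say which impure functions were candidates or how they were debounced, so the following is only a minimal trailing-edge sketch. This `debounce` is hand-written for illustration (lodash also ships a packaged `debounce` with more options), and `repricePage` in the usage note is a hypothetical name.

```javascript
// Minimal trailing-edge debounce: `fn` runs once, `waitMs` after the last
// call in a burst. Earlier calls in the burst are dropped.
function debounce(fn, waitMs) {
  let timer = null;
  return function debounced(...args) {
    // Restart the countdown on every call.
    if (timer !== null) clearTimeout(timer);
    timer = setTimeout(() => {
      timer = null;
      fn.apply(this, args); // use the arguments from the most recent call
    }, waitMs);
  };
}
```

For example, `const scheduleReprice = debounce(repricePage, 50);` would run `repricePage` once, 50 ms after the last call in a burst. That delay is the user-experience tradeoff in question: the page can briefly show stale content until the debounced call fires.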

Improvements Made

Measuring and Improving Memoization

We originally wrote an in-house memoization function for our app long before this sprint. While this function has proven reliable, we wondered if its performance rivaled popular NPM packages that offered more robust features and potentially faster cache-read times.

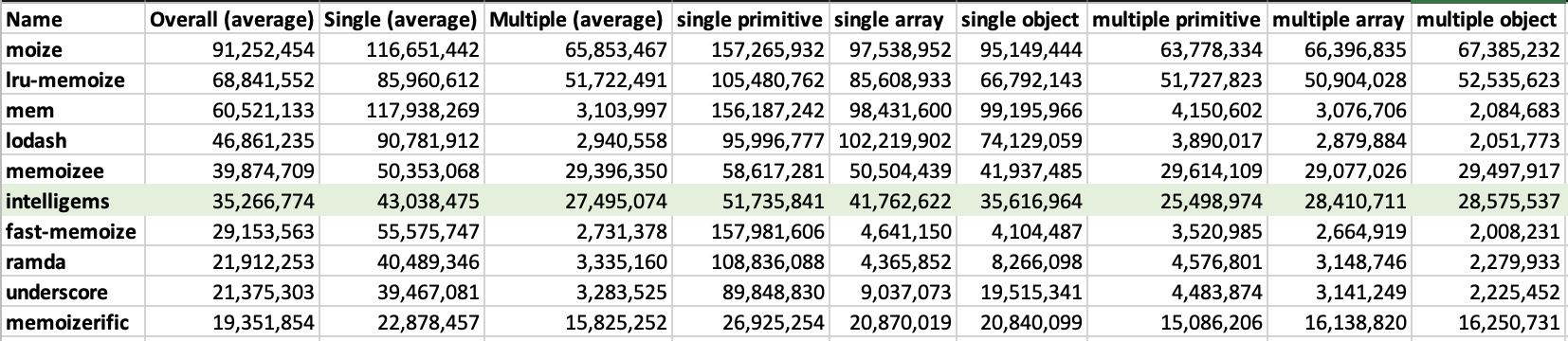

While searching for memoization packages, we came across a robust benchmarking suite that compared ten popular memoization packages. We cloned the repo and added our function to see how it fared. Most memoization benchmark suites, including this one, memoize computationally heavy functions with a small input-parameter space, such as calculating a Fibonacci number. Ours performed well: not the best, but average or above average compared to popular packages such as lodash and underscore.

General Benchmark Scores:

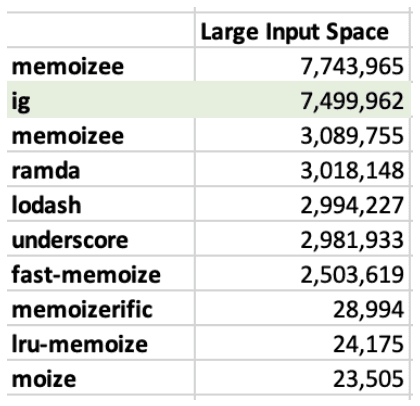
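The post doesn't include its benchmark code. As a rough, hypothetical illustration of the small-input-space workload these suites use, the sketch wraps a recursive Fibonacci in a minimal single-argument memoizer (not the Intelligems function) and times it against the naive version with `performance.now()`.

```javascript
// Minimal single-argument memoizer: caches results in a Map keyed by the argument.
function memoize(fn) {
  const cache = new Map();
  return function memoized(arg) {
    if (cache.has(arg)) return cache.get(arg);
    const result = fn(arg);
    cache.set(arg, result);
    return result;
  };
}

// Naive recursive Fibonacci: exponential time, the classic benchmark workload.
function slowFib(n) {
  return n < 2 ? n : slowFib(n - 1) + slowFib(n - 2);
}

// Memoized Fibonacci: recursive calls go through the memoized wrapper,
// so each value of n is computed only once.
const fastFib = memoize((n) => (n < 2 ? n : fastFib(n - 1) + fastFib(n - 2)));

// Time `iterations` calls of fn(arg) with the high-resolution clock.
function timeIt(label, fn, arg, iterations) {
  const start = performance.now();
  let result;
  for (let i = 0; i < iterations; i++) result = fn(arg);
  const ms = performance.now() - start;
  console.log(`${label}: ${ms.toFixed(2)} ms for ${iterations} calls`);
  return result;
}

timeIt("naive", slowFib, 25, 10);
timeIt("memoized", fastFib, 25, 10);
```

Here the memoized cache only ever holds 26 entries (`n` from 0 to 25), so a workload like this says little about how a cache behaves when it must hold many distinct keys.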

However, this benchmarking suite lacked a benchmark critical to our use case: memoizing functions with large input-parameter spaces. This matters because many of our functions take Shopify Product and Variant IDs as input parameters; in other words, the memoized cache needs to stay performant as it fills with many permutations of these IDs. The results of this benchmark were surprising: our memoization function drastically outperformed the competing packages.
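The post doesn't describe how its memoization function keys the cache. One common approach for multi-argument functions, shown here purely as an illustration and not as the Intelligems implementation, is to nest one `Map` per argument so a lookup never serializes the arguments into a string. The harness times a cold pass and a warm pass over every permutation of made-up product and variant IDs; `memoizeMulti`, `priceFor`, and the ID ranges are all hypothetical.

```javascript
// Hypothetical multi-argument memoizer: one nested Map level per argument,
// so lookups never build a string key (e.g. via JSON.stringify).
function memoizeMulti(fn) {
  const root = new Map();
  const RESULT = Symbol("result"); // private marker for the cached value at a key path
  return function memoized(...args) {
    let node = root;
    for (const arg of args) {
      let next = node.get(arg);
      if (next === undefined) {
        next = new Map();
        node.set(arg, next);
      }
      node = next;
    }
    if (node.has(RESULT)) return node.get(RESULT);
    const result = fn(...args);
    node.set(RESULT, result);
    return result;
  };
}

// Simulated pure function of (productId, variantId); the IDs are made up.
let computeCount = 0;
const priceFor = memoizeMulti((productId, variantId) => {
  computeCount++;
  return (productId * 31 + variantId) % 1000; // placeholder "price"
});

const PRODUCTS = 500;
const VARIANTS = 20; // 10,000 distinct permutations

function runPass() {
  const start = performance.now();
  for (let p = 0; p < PRODUCTS; p++) {
    for (let v = 0; v < VARIANTS; v++) priceFor(p, v);
  }
  return performance.now() - start;
}

const coldMs = runPass(); // every call misses and fills the cache
const warmMs = runPass(); // every call hits the cache
console.log(`cold: ${coldMs.toFixed(2)} ms, warm: ${warmMs.toFixed(2)} ms, computed: ${computeCount}`);
```

Raising `PRODUCTS` or `VARIANTS` is a simple way to check whether warm-pass time stays roughly proportional to the number of lookups as the cache grows.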

Large Cache Benchmark Scores:

Since this was our most important benchmark, we kept our in-house function rather than switching to a memoization package. A nice win for us! With that decision made, we combed through our codebase and memoized other pure functions where appropriate.

Fetching LocalStorage and Cookies

The solution here was straightforward: our app generally only cares about the state of the keys we modify, and we are not concerned with third parties changing these stores. Therefore, we wrapped all calls to LocalStorage and Cookies in a class that memoizes the initial fetch per key and clears the memoized response whenever we push an update.
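A minimal sketch of such a wrapper, assuming a backend with the `getItem`/`setItem`/`removeItem` shape of `window.localStorage`. The class name `CachedStore` is hypothetical, and a cookie store would need its own adapter exposing the same three methods.

```javascript
// Hypothetical read-through cache over a key/value store such as window.localStorage.
// Assumes only this app writes the keys it reads, so cached values stay valid
// until we change them ourselves.
class CachedStore {
  constructor(backend) {
    this.backend = backend; // anything with getItem / setItem / removeItem
    this.cache = new Map();
  }

  get(key) {
    // Only the first read of a key touches the backend.
    if (!this.cache.has(key)) {
      this.cache.set(key, this.backend.getItem(key));
    }
    return this.cache.get(key);
  }

  set(key, value) {
    this.backend.setItem(key, value);
    // Invalidate rather than cache `value`: localStorage stores strings,
    // so the next get() re-reads exactly what was persisted.
    this.cache.delete(key);
  }

  remove(key) {
    this.backend.removeItem(key);
    this.cache.delete(key);
  }
}
```

In a browser this could be used as `const storage = new CachedStore(window.localStorage);`, with every read and write routed through `storage`.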

An important note: this model only works for cookies whose values we alone set. Cookies set or updated by other parties may change without any indication, so for those we used lodash.throttle to limit how often we read the same cookie within a fixed period.
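To keep the sketch dependency-free, this hand-rolled per-key reader approximates the leading-edge behavior of lodash.throttle: at most one real read per key per window, with the last value returned in between. It is not lodash's implementation and omits lodash's trailing call. `throttledReader` and `readCookie` are hypothetical names, and the injectable `now` clock exists only to make the logic testable.

```javascript
// Hypothetical per-key throttled reader: calls read(key) at most once per
// `waitMs` for each key and returns the last value read in between.
function throttledReader(read, waitMs, now = () => Date.now()) {
  const entries = new Map(); // key -> { value, readAt }
  return function get(key) {
    const t = now();
    const entry = entries.get(key);
    if (entry && t - entry.readAt < waitMs) return entry.value; // still fresh
    const value = read(key);
    entries.set(key, { value, readAt: t });
    return value;
  };
}

// Browser-only example reader: finds `name` in document.cookie.
function readCookie(name) {
  for (const part of document.cookie.split("; ")) {
    const eq = part.indexOf("=");
    if (eq !== -1 && part.slice(0, eq) === name) {
      return decodeURIComponent(part.slice(eq + 1));
    }
  }
  return null;
}
```

For example, `const getThirdPartyCookie = throttledReader(readCookie, 1000);` would parse `document.cookie` for a given name at most once per second, so a value changed by another party is picked up within one window.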

This small change drastically reduced our calls to LocalStorage and Cookies and immediately improved our app’s performance: an easy and effective win.

Mutation Observer Optimizations

Our app needs to update the product prices visitors see without any flickering. Before this analysis, we did this by attaching a Mutation Observer as soon as our script loaded. However, our Firefox Performance Profile analysis showed that attaching it this early was unnecessarily eager. We found that attaching the Mutation Observer at First Paint instead offered a similar user experience while reducing Total Blocking Time and raising our Lighthouse scores.
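The post doesn't say how it detects First Paint. A minimal sketch, assuming a `PerformanceObserver` watching `"paint"` entries with a double-`requestAnimationFrame` fallback. `onFirstPaint`, `startPriceObserver`, and the `env` parameter (which lets browser globals be swapped for test doubles) are hypothetical names.

```javascript
// Hypothetical helper: run `callback` once, after the browser reports a paint.
// Uses PerformanceObserver "paint" entries when supported; otherwise waits two
// animation frames, a common approximation of "after the next paint".
function onFirstPaint(callback, env = globalThis) {
  let done = false;
  const runOnce = () => {
    if (!done) {
      done = true;
      callback();
    }
  };

  const PO = env.PerformanceObserver;
  const types = PO && PO.supportedEntryTypes;
  if (Array.isArray(types) && types.includes("paint")) {
    const po = new PO((list, observer) => {
      if (list.getEntries().length > 0) {
        observer.disconnect();
        runOnce();
      }
    });
    // `buffered: true` also delivers a paint that happened before this call.
    po.observe({ type: "paint", buffered: true });
  } else {
    const raf = env.requestAnimationFrame
      ? (cb) => env.requestAnimationFrame(cb)
      : (cb) => setTimeout(cb, 16);
    raf(() => raf(runOnce));
  }
}

// Hypothetical: attach the price-updating MutationObserver only after first paint.
// `updatePrices` must be idempotent (write only when a price actually changes),
// otherwise its own DOM writes would keep re-triggering the observer.
function startPriceObserver(root, updatePrices, env = globalThis) {
  onFirstPaint(() => {
    updatePrices(root); // catch up on content rendered before the observer existed
    const observer = new env.MutationObserver(() => updatePrices(root));
    observer.observe(root, { childList: true, subtree: true, characterData: true });
  }, env);
}
```

In a browser this might be called as `startPriceObserver(document.body, applyTestPrices)`, where `applyTestPrices` stands in for whatever function rewrites prices. The catch-up call matters because content rendered before the observer attaches never produces a mutation record.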

Results

Performance monitoring will be an ongoing project throughout the life of our app. The pendulum will likely swing between adding new features and pulling back to analyze and optimize them as necessary. That said, this sprint delivered a few dramatic improvements to our app, and we now have monitoring in place to help keep it fast going forward.
