AB Testing

Jul 12, 2022

Split Traffic

Why you should be splitting traffic when A/B testing

Drew Marconi

A/B testing is a powerful e-commerce technique that helps operators gain confidence in which changes will help their business the most. It is at the core of every product manager’s toolkit when rolling out new features, and sophisticated marketers often run A/B tests to find the most effective creative assets and audiences to target.

So, quick refresher on A/B testing: A/B testing is an experimental technique in which participants (or customers) are split into separate groups and exposed to different “treatments.” “Treatments” in this context can refer to anything about an experience: what color a button on the site is, how many options a page loads, or even what price a product sells for (that last one is Intelligems’ specialty!).

Say you sell scented candles for $30, but you wonder whether selling them for $35 might make you more profit in the long run. An A/B test might show half of your site traffic the $35 price, while the other half of your traffic would see the $30 price. You could measure each group’s purchasing patterns to decide whether the $35 really is better for your bottom line. A/B test complete!
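To make “better for your bottom line” concrete, here is a minimal sketch of the comparison. Every number in it is made up for illustration (the $12 unit cost, the visitor counts, and the order counts are all assumptions), and `GroupResult` and `profit_per_visitor` are hypothetical names. The point is only that a higher price can convert worse and still earn more profit per visitor.

```python
from dataclasses import dataclass


@dataclass
class GroupResult:
    """Outcome of one treatment group (all figures here are invented)."""
    price: float    # price shown to this group, in dollars
    visitors: int   # visitors assigned to this group
    orders: int     # orders placed by this group


def profit_per_visitor(group: GroupResult, unit_cost: float) -> float:
    """Average profit per visitor: (price - unit cost) * orders / visitors."""
    margin = group.price - unit_cost
    return margin * group.orders / group.visitors


# Hypothetical inputs: a $12 unit cost and 5,000 visitors per group.
UNIT_COST = 12.0
control = GroupResult(price=30.0, visitors=5000, orders=250)  # 5.0% convert
variant = GroupResult(price=35.0, visitors=5000, orders=220)  # 4.4% convert

control_ppv = profit_per_visitor(control, UNIT_COST)
variant_ppv = profit_per_visitor(variant, UNIT_COST)
print(f"$30 price: ${control_ppv:.3f} profit per visitor")
print(f"$35 price: ${variant_ppv:.3f} profit per visitor")
```

With these invented numbers the $35 group converts less often but earns more per visitor, which is why conversion rate alone is not enough to judge a price test.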

A/B Testing is Hard

For an A/B test to be “perfect,” you need a clean, controlled test environment. You want each “subject” (i.e., someone visiting your site) to see exactly one treatment, and you want your groups to be randomly split so that there’s no bias in either group. Basically, your test needs to be as clean as this:

But this is real life, and getting a perfect test environment right is far from easy.

Suppose you’re testing green versus blue “Order Now” buttons for those scented candles. When a customer first comes to your website and gets assigned to the blue treatment, it’s crucial that she continues to see that blue button during future visits. Unfortunately, it’s challenging to recognize her if she moves from her phone to her laptop. Similarly, switching web browsers (e.g., Chrome to Safari) or clearing cookies makes it difficult to recognize a customer and keep their treatment consistent. But if she ends up seeing both buttons, we can’t say whether the blue or the green one did a better job of pushing her towards buying the candle!
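One common way to keep a returning visitor in the same group is to derive the treatment from a hash of her identifier instead of flipping a coin on every visit. The sketch below is an illustration under stated assumptions, not a description of any particular tool: `assign_treatment`, the experiment name, and the visitor IDs are all hypothetical, and it assumes you already have a stable identifier. It does not solve the cross-device problem by itself; if the ID lives in a cookie, a new browser still produces a new ID.

```python
import hashlib

TREATMENTS = ("blue", "green")


def assign_treatment(visitor_id: str, experiment: str = "order-button-color") -> str:
    """Deterministically map a visitor to one treatment.

    Hashing the experiment name together with the visitor ID means the same
    visitor always gets the same treatment, without storing any state.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Use the first 8 bytes of the hash as a large integer, then pick a bucket.
    bucket = int.from_bytes(digest[:8], "big") % len(TREATMENTS)
    return TREATMENTS[bucket]


# The same (hypothetical) visitor gets the same button on every call.
first_visit = assign_treatment("customer-1042")
later_visit = assign_treatment("customer-1042")
print(first_visit, later_visit)

# Across many visitors, the split should land close to 50/50.
counts = {name: 0 for name in TREATMENTS}
for i in range(10_000):
    counts[assign_treatment(f"customer-{i}")] += 1
print(counts)
```

Including the experiment name in the hash keeps assignments in one test independent of assignments in another, so the same visitors do not always land in the “A” group together.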

Not only is it difficult to keep groups separate, it’s just as hard to separate groups fairly to begin with.

Suppose you want to run an A/B test using two TV ads. Since we can’t split TV viewers by individual devices, we have to split by geographical region. Advertisers often struggle to build geographic groups that are equivalent on all the relevant criteria, such as age, race, income, and more. If our two groups aren’t equal, then whatever difference we see in their behavior might not be due to our different ads; instead, it might just be because the groups were already different to begin with.

A Tempting Alternative - Pre/Post Testing

Clearly, A/B testing is hard and sometimes not feasible, so analysts will often turn to A/B testing’s ugly step sibling: pre/post analysis. Pre/post analysis is similar to A/B testing in that it tests two versions of a treatment, but instead of running those in parallel, a pre/post test runs one version on all traffic, and then the other. Going back to the scented candle example, this setup would mean I might see the $35 price one week, and the $30 price the next week.

Confounding factors in pre/post testing

This structure is not ideal for a bunch of reasons. First and foremost, the world changes over time, so the context around your experiment might change between your treatments. I might be more likely to buy the $30 candle than the $35 candle, but is that because the $5 difference matters that much to me, or is it because your biggest competitor happened to run out of stock right around when you switched prices? Maybe it’s because a TikTok video with one of your candles went viral. Or maybe you switched prices right before the peak time for Valentine’s Day gifts! So your underlying “natural” rate of conversion could be wildly different from week 1 to week 2.

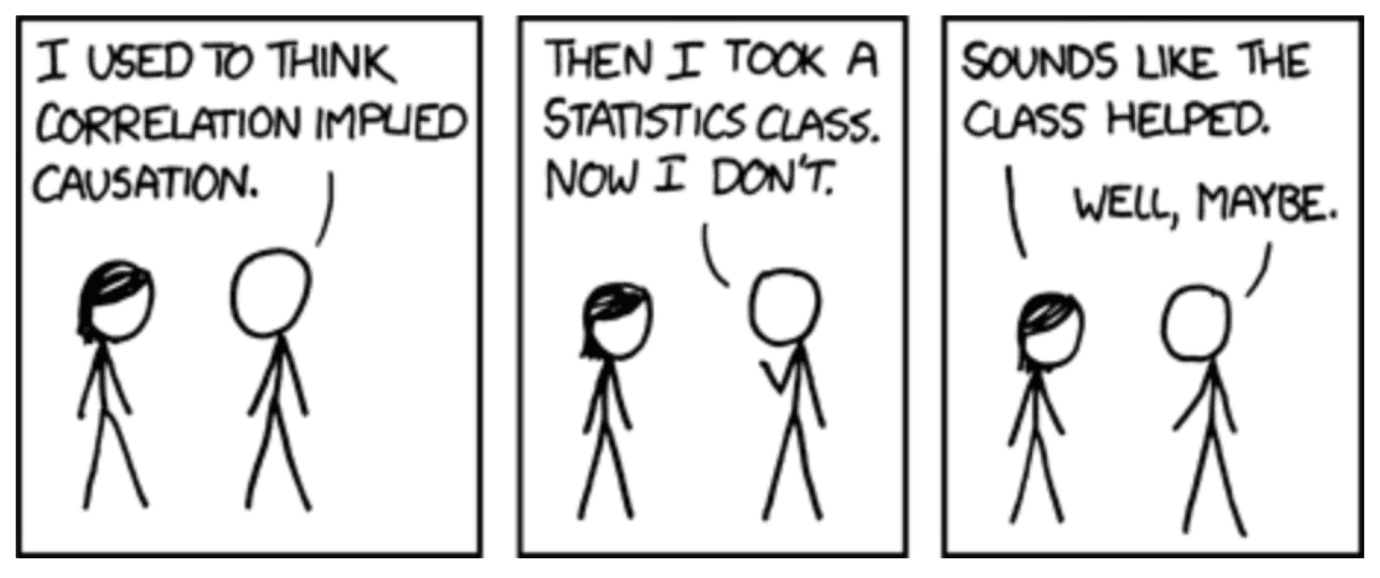
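A small simulation can show how much a shifting baseline distorts a pre/post comparison. Everything in this sketch is invented for illustration: the conversion rates, the two-point “Valentine’s week” lift, the traffic volume, and the helper name `simulate_orders` are all assumptions. In this made-up world, the $35 price truly converts half a percentage point worse than the $30 price.

```python
import random


def simulate_orders(visitors: int, conversion_rate: float, rng: random.Random) -> int:
    """Count how many of `visitors` buy, each with probability `conversion_rate`."""
    return sum(rng.random() < conversion_rate for _ in range(visitors))


rng = random.Random(7)  # fixed seed so the run is repeatable
VISITORS_PER_WEEK = 20_000

# Invented "true" world: $35 converts 0.5 points worse than $30,
# and week 2 lifts conversion at every price by 2 points.
BASE_RATE = {"$30": 0.050, "$35": 0.045}
SEASONAL_LIFT = {1: 0.000, 2: 0.020}

# Pre/post: everyone sees $35 in week 1, then everyone sees $30 in week 2.
week1 = simulate_orders(VISITORS_PER_WEEK, BASE_RATE["$35"] + SEASONAL_LIFT[1], rng)
week2 = simulate_orders(VISITORS_PER_WEEK, BASE_RATE["$30"] + SEASONAL_LIFT[2], rng)
pre_post_diff = (week1 - week2) / VISITORS_PER_WEEK

# Split: in each week, half of the visitors see each price.
half = VISITORS_PER_WEEK // 2
orders = {"$30": 0, "$35": 0}
for week in (1, 2):
    for price in orders:
        rate = BASE_RATE[price] + SEASONAL_LIFT[week]
        orders[price] += simulate_orders(half, rate, rng)
split_diff = (orders["$35"] - orders["$30"]) / (2 * half)

print(f"pre/post estimate of $35 vs. $30 conversion: {pre_post_diff:+.4f}")
print(f"split estimate of $35 vs. $30 conversion:    {split_diff:+.4f}")
```

The pre/post estimate bundles the seasonal lift into the price effect, so it should come out several times larger in magnitude than the true half-point gap. The split estimate should land near the true gap, because both prices were live during both weeks.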

This all comes back to the idea of confounds, the bane of any hypothesis tester. When I run an experiment, I’d like the difference between two groups to reach statistical significance so that I can confidently say that my treatment caused the observed change in behavior. Confounds throw a wrench in that last part; there might be a statistically significant difference, but we observed the two treatments at different times, so we can’t be sure it was us and our price change that caused the difference.

The Benefits of Split A/B Testing

Notice how true “split” A/B testing frees us from that problem! Whatever changes were happening in the world while our experiment was running, they happened to both groups at the same time. Ultimately, the only systematic difference between the two groups was the price of that scented candle, so if our results are statistically significant, we can take credit for making that difference happen.
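For readers who want to see what “statistically significant” means in practice, here is a minimal sketch of a two-proportion z-test on conversion rates, using only Python’s standard library. It is one common choice for comparing two groups, not necessarily the method any specific testing tool uses, and the order and visitor counts are invented for illustration.

```python
import math
from statistics import NormalDist
from typing import Tuple


def two_proportion_z_test(orders_a: int, visitors_a: int,
                          orders_b: int, visitors_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = orders_a / visitors_a
    rate_b = orders_b / visitors_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (orders_a + orders_b) / (visitors_a + visitors_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Invented results: 5,000 visitors per group.
z, p = two_proportion_z_test(orders_a=250, visitors_a=5000,   # $30 group
                             orders_b=220, visitors_b=5000)   # $35 group
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these made-up counts the p-value comes out well above the conventional 0.05 threshold, so a gap of this size on this much traffic would not yet count as significant. A split test makes the comparison trustworthy, but it still needs enough traffic to separate a real effect from noise.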

Split A/B testing is harder to implement, but so much more trustworthy that the juice is worth the squeeze. If you want to run that scented candle test, a similar A/B test on something you actually sell, or any kind of A/B test on pricing, promotions, or shipping costs, reach out to Intelligems or check us out on the Shopify App Store! We look forward to helping you design the best A/B test possible!
